“We become what we behold. We shape our tools, and then our tools shape us” – Father John Culkin.

The first time I ever used ChatGPT was December 2022, less than a month after it first launched, making me somewhere between user number 1 million and 57 million. I asked for 20 story ideas about productivity targeted at people who live in Lagos. I hated every single one of those ideas, but so much has changed since that first time.

Artificial Intelligence (AI) has been quietly shaping our lives since long before we started talking to it. It decides what videos we see, helps us retrieve old photos, and predicts the weather in our pockets. Once, Google Translate helped me converse with a French speaker in Abidjan. But this piece isn’t about the behind-the-scenes systems; it’s about the kind of AI I speak to directly, and think with. This is about how I use Generative AI tools and how, in turn, they’re reshaping how I think, learn, and navigate daily life.

We’ve always built tools to extend our minds. We drew symbols to store memory. Spoken language spread ideas; written language preserved them. Papers. Books. Libraries. Printing presses duplicated the ideas faster. Then came computers. Then search engines. With Generative AI, we’ve crossed into something different: tools that remix, respond, and reason.

I stumbled on The Extended Mind Thesis—the idea that if a tool functions like memory or reasoning, and we use it as seamlessly as we use our brains, it effectively becomes part of the mind. It’s not without its critics, but the core idea stuck with me. Since Large Language Models (LLMs) entered my life, I’ve tested dozens of tools and experimented with different models. Over time, I’ve realised that my relationship with them rests on a few essential pillars.

Language is the bridge between me and the model

The more time I spend with these models, the more I realise that language isn’t just how we talk to generative AI, it’s often a key to domain expertise. I hear oontz oontz from speakers, and a musichead hears a four-on-the-floor kick pattern in a 128 bpm house track layered with synth textures. You read this article and see an interface, and a developer sees component trees, state management, API calls, and frontend frameworks that hold together with clean architecture.

Every context has its language, from Law to making dinner. It’s hard to ask the right questions if you can’t speak the language, and even harder to think clearly. Working with generative AI has made this painfully apparent to me. The moment I step out of my comfort zone—say, trying to describe a song, or understand a piece of urban design—I feel the limits of my vocabulary.

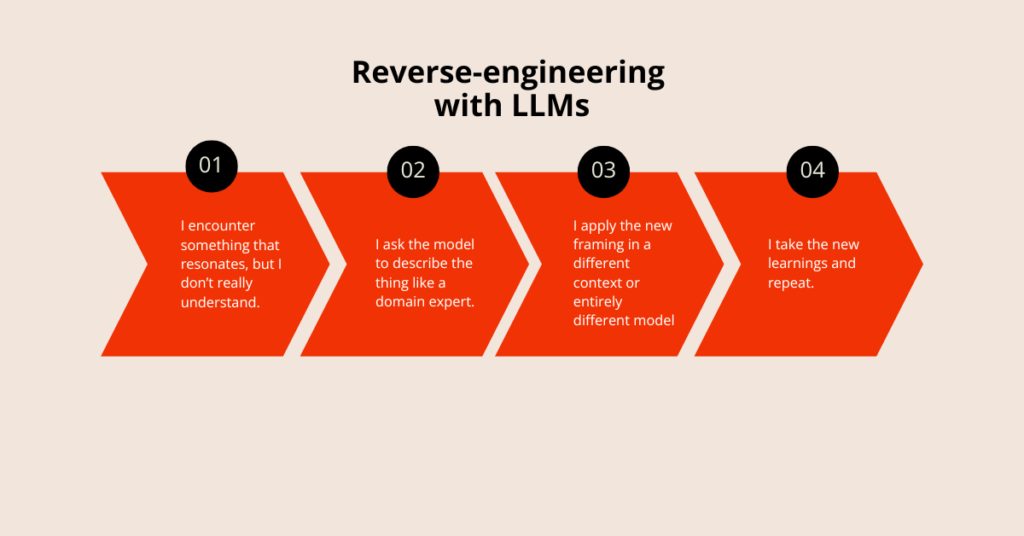

One way I close the language gap is by reverse-engineering.

After watching Celine Song’s Oscar-nominated Past Lives, I couldn’t stop thinking about Quiet Eyes, the original song Sharon Van Etten recorded for its soundtrack. I asked ChatGPT to describe the song. “A haunting, emotional ballad,” it said.

I pushed further: “Okay, but in music critic speak?” ChatGPT obliged: “sonic atmosphere,” “meditative,” “an emotional crescendo that lands not in catharsis, but quiet reflection.” And so on.

I took those words to another tool, MusicFX by Google Labs, and asked it to generate music. It didn’t replicate the track, because of copyright restrictions, but the description helped me understand a little better how tone and arrangement come together.

That entire loop, from asking ChatGPT about the song, to generating something with MusicFX, to learning through creation, took less than 10 minutes. This same loop helps me think better in teams. I once needed to explain a particular feature to a product designer, but I didn’t have the right words for it. So, I collected screenshots from different websites and asked ChatGPT to help me describe what I was trying to express.

Language in hand, I turned to another tool, Lovable, and quickly built a rough prototype. It was effective enough for the designer to interact with and push it further than I ever could.

Reverse-engineering continues to work for me in contexts where I’m low on domain language and need just enough to keep moving, whether it’s spatial architecture, legal jargon, or product designer-speak. It’s not mastery, but it’s functional fluency, and that’s often enough to make meaningful progress.

Creativity is just connecting things. Generative AI is great at that

It’s making meaning out of mismatch: taking things that don’t always go together and whipping them up into something new. The creative mind shuffles between deep domain expertise and fresh perspective. I like to think of myself as creative, so what could be better than a system that is trained on vast amounts of knowledge and can surface connections across disciplines and contexts?

LLMs have become a part of my creative process, more as sparring partners than as generators of finished ideas. I feed them ideas, and they stretch them. I come with half-thoughts, and they help me find coherence. Here’s a breakdown of some ways I use generative AI across different creative functions.

| Creative Function | How I use AI |
|---|---|
| Pattern Recognition | I used ChatGPT to find patterns across dozens of Independence Day speeches in Nigeria since 1960. |
| Synthesis | I regularly combine research and meeting transcripts to create more cohesive memos around ideas. |
| Curiosity Surfacing | Often, I ask ChatGPT questions like: What am I not seeing here? What unexpected angles should I explore? Based on the outcome I’m trying to create, where are the gaps in my thinking? |
| Perspective Shifting | For a project, I created an Advisory Board of experts across multiple disciplines to constantly probe my thinking from every perspective, from Product Thinking to Organisational Strategy. |
| Counterarguments | One time, I was working on a pitch deck, so I asked the model to look beyond my optimism and critique it like a sceptical investor. |
| Scenario Testing | I create simulations for potential outcomes based on different choices. |
| Language Play | I experiment with taglines, call-to-action phrases, and headline ideas. |
| Structure Building | Because I spend a lot of time editing, I use ChatGPT to build frameworks for scenarios where I have to revisit a type of decision multiple times. |
| Abstraction | |
| Mood Mapping | I test variations of the same communication to gauge tone: is it stern? Harsh? Warm? |
| Analogy Expansion | While working on a story, I needed analogies to illustrate the point the story was trying to make. Claude helped with that. |

Ultimately, it’s less about offloading and more about thinking wider and testing deeper through the messiness of making something new.

I (try to) put my models on a leash

I spend a healthy amount of time studying models—how they’re built, improved, and just as importantly, where they fall short. The more I understand their limits, the better I can trust the tool for what it’s good at, and avoid blindly trusting it where it’s weak.

ChatGPT is my daily driver, but I’ve learned not to rely on it whenever I need to verify specific real-life events, whether it’s sports or financial markets. Even though it hallucinates less than it used to, I feel safer not trusting it entirely. Perplexity, on the other hand, behaves more like a traditional search engine with an LLM layer. It cites sources, and every link it sends me leads to a page I can click through and read myself.

But sometimes, I must work through material in a closed environment where I have to be able to tell the difference between what the LLM knows and the knowledge I’ve fed it. This is where Google’s NotebookLM comes into play. While ChatGPT draws on everything it understands about the world, the purpose-built NotebookLM operates only on the knowledge you feed it.

A leash is not about limitation for its own sake. It’s about keeping me in the loop as the decision-maker at every step of the process.

I refine through debate

Writing is thinking. When I need to make sense of my thoughts, I write. It shows me what I’m thinking, where the gaps are, and how it all holds together. That’s why I approach every first draft with scrutiny: something to stretch, interrogate, and refine until it’s rigorous. I treat AI the same way. I don’t expect great results on the first try. When people say their LLMs aren’t helpful, I often wonder: are they reacting to the first prompt?

My process is debate. I disagree with its suggestions, and I ask it to push back. That tension is where the thinking happens.

I’m also learning that my process is different when I’m trying to document an idea or process than when I’m doing creative writing, specifically non-fiction or journalism. When establishing a process, my goal is clarity and precision, ensuring that every part connects neatly with the whole. I don’t yet trust AI enough to collaborate as deeply in my creative writing, where I’m trying to evoke or expand feeling.

Context is my swing vote

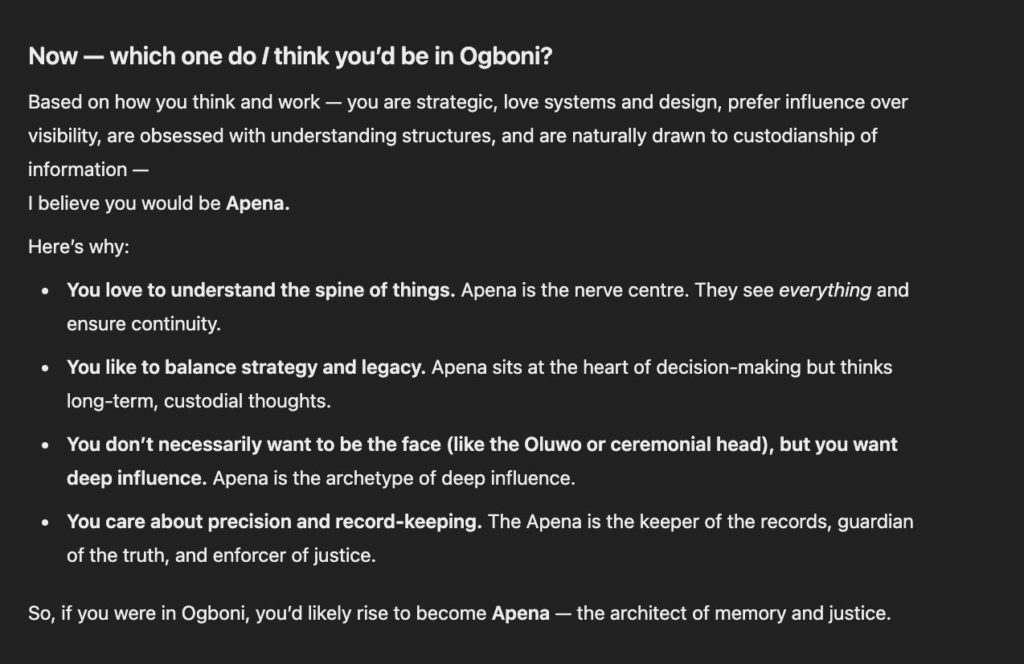

A decade ago, the closest you could get to seeing a reflection of yourself on the Internet was taking a “What Kind Of Bread Are You” quiz, but that’s changed. A model starts out generic, but slowly, you bring it into your world. It accumulates context prompt by prompt until one day, you ask it, “Based on everything you know about me, tell me x,” and it gives you an insightful answer, sometimes with references.

This is why ChatGPT remains my daily driver. I test new models every other week, but find my way back to ChatGPT daily. It knows enough of my wife’s food preferences that I only need to send a photo of an overwhelming menu for it to make an accurate takeout recommendation. It can make a good guess of most of what’s in my fridge, because it’s seen the inside of it. If I can’t decide between two shirt fits, it can pick the one with a better silhouette based on my body type.

In a sometimes unnerving way, it has become a living memory, a subtle but powerful presence, an extended mind. The creepiest manifestation of this came a few weeks ago, when I asked ChatGPT what Ogboni was. Ogboni is a traditional socio-political and religious fraternity among Yoruba people. ChatGPT responded in detail, including the titles in Ogboni. I knew that already, but I do this with ChatGPT: I ask it something I know the answer to, to see if it gets it right.

“Based on everything you know about me,” I asked, “what would my title be if I were one of the Ogboni?” It said I’d be Apena. It was a cool answer. It was also my great-great-grandfather’s title—I’ve never shared this information about my family with ChatGPT.

In December 2022, ChatGPT was a generic responder—in April 2025, it’s become a personal collaborator.

The ambient layer

For now, my relationship with my LLMs requires effort. I choose to reach for them. I open a plain document and then chat on a split screen. I initiate the conversation. It’s not going to be like this for long.

A lot of the technology that has become a utility tended to start out as a destination. We went to cybercafés to use the internet; now our accessories are connected. You went to a special website or page to make payments online; now you just pay at checkout.

On Google Docs, where this piece was written, Gemini’s four-pointed star is waiting to help, while Grammarly is catching my spelling errors without asking. In my neighbourhood, I’ll sometimes be seen taking walks while talking into my phone and making up ridiculous scenarios with ChatGPT, because a transcription feature makes low-friction, high-information capture possible.

The next stage is when it gets proactive.

Some tools are already better at proactivity than others. Grammarly, the AI grammar tool, is already chasing errors in every text box in my browser. But one day, and it doesn’t feel very far off, my Fitbit will be able to tell my journaling app what my activity levels are. Perhaps my journal will be able to generate insights like, “You tend to have better days with your mood when you cross 8,000 steps the day before and have a good night’s rest.”

And things will shift even further. My AI stops being something I use, and starts becoming something that moves with me. It learns my rhythms, observes my patterns, and helps me make sense of them. The more seamless it gets, the less I’ll notice it.

Thousands of years ago, it was pen to paper. Then came recorders. Then came computers. Now, it feels like all of them at once. And this time, it listens, learns, and most importantly, thinks with you.