The economics of business growth are shifting. For decades, increasing revenue meant increasing headcount. When customer demand doubled, companies expanded support teams. When sales targets rose, recruiters filled more seats. Growth followed a linear path, with each step forward tied directly to hiring.

That model worked, but it came with predictable costs. Every new hire adds salary, benefits, onboarding time, management oversight, and a ramp-up period before reaching full productivity. Scaling operations meant scaling expenses. Today, organizations are beginning to decouple growth from headcount by deploying autonomous AI agents to handle repetitive, rules-based, and data-intensive tasks that once required additional staff.

What began as experimentation is quickly becoming an operational strategy. Businesses are increasing output, responsiveness, and efficiency without proportionally expanding their workforce. The old equation, “grow revenue, grow headcount,” is no longer automatic. The organizations moving ahead are those that clearly define which work belongs to intelligent systems and which requires human judgment. Making that distinction is becoming a core leadership discipline rather than simply a technical upgrade.

What AI agents actually are, and why the confusion matters

AI agents are autonomous software systems that plan, reason, use tools, and execute multi-step workflows without continuous human prompting. They connect to CRMs, databases, email platforms, and internal systems through APIs and protocol layers like Anthropic’s Model Context Protocol (MCP) and Google’s Agent2Agent (A2A) protocol.

The technical distinction

This distinction matters because the term gets applied loosely. A chatbot responds to direct questions within a fixed script. A copilot, like Microsoft 365 Copilot or GitHub Copilot, assists a human who remains in control of each action. An agent operates toward a defined goal with delegated authority, deciding which tools to use, which data to access, and which steps to take.

The comparison below captures the structural differences:

| Feature | Rule-Based Automation (RPA) | Copilots | AI Agents |
| --- | --- | --- | --- |
| Decision logic | Fixed rules, if/then | Human-prompted suggestions | Goal-oriented reasoning |
| Error response | Stops or breaks | Flags the problem | Retries, finds workaround |
| Data handling | Structured inputs only | Structured + text prompts | Unstructured (emails, PDFs, voice) |
| Workflow scope | Single task | Task assistance | End-to-end multi-step |
| Human role | Operator | Director | Orchestrator |

Why clarity matters for adoption

The shift from copilots to agents mirrors a change in how businesses delegate work. A copilot requires a human operator for every action. An agent requires a human orchestrator who defines goals, sets guardrails, and reviews outcomes.

Google search data shows users still actively search for “AI agents vs chatbots” and “what are AI agents exactly.” The definitional clarity gap slows enterprise adoption. Leaders who understand the distinction deploy agents faster and see results sooner.

Customer support: where agents deliver the fastest ROI

Customer service is the most mature production use case for AI agents, and the economics explain why. When a company’s inbound queries double, it no longer needs to double its team. Gartner projects agents will handle 80% of common service queries without human intervention by 2029, a shift worth an estimated $80 billion in global savings.

Beyond FAQ bots: autonomous resolution agents

Modern support agents go beyond FAQ retrieval. They access CRM records, process refunds, update subscription tiers, troubleshoot technical issues by querying internal knowledge bases, and escalate to human representatives only when confidence drops below defined thresholds. One pharmaceutical company cut live agent reliance by 40% after deploying resolution agents that handled tier-one inquiries end-to-end.
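The escalation rule described above can be sketched in a few lines. This is a minimal illustration, not any vendor's implementation: the `Resolution` type, the `route` function, and the 0.8 threshold are all hypothetical, and a real deployment would tune thresholds per action type.

```python
from dataclasses import dataclass

# Hypothetical threshold; real systems would tune this per action and risk level.
CONFIDENCE_THRESHOLD = 0.8


@dataclass
class Resolution:
    ticket_id: str
    action: str        # e.g. "process_refund"
    confidence: float  # agent's confidence in its proposed action, 0.0 to 1.0


def route(resolution: Resolution, threshold: float = CONFIDENCE_THRESHOLD) -> str:
    """Return 'auto' to let the agent act, or 'human' to escalate."""
    if resolution.confidence < threshold:
        return "human"
    return "auto"


decisions = [
    route(Resolution("T-1", "process_refund", 0.93)),
    route(Resolution("T-2", "update_subscription", 0.55)),
]
print(decisions)  # ['auto', 'human']
```

The design choice worth noting is that escalation is the default whenever confidence is below the line, so a miscalibrated agent fails toward a human rather than toward an unreviewed action.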

Proactive intervention, not reactive ticket handling

Proactive agents add another layer. They monitor usage data, flag at-risk accounts based on engagement patterns, and trigger retention workflows (personalized outreach, discount offers, and check-in calls) before a customer signals intent to leave. The result is not a smaller support team. It is a support function that scales without hiring.
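As a rough sketch of how such a flag might work, the example below treats a sharp drop in logins as the engagement signal. The `AccountUsage` fields, the 50% drop ratio, and the workflow names are assumptions chosen for illustration; production systems would typically combine several signals.

```python
from dataclasses import dataclass


@dataclass
class AccountUsage:
    account_id: str
    logins_last_30d: int
    logins_prev_30d: int


def is_at_risk(usage: AccountUsage, drop_ratio: float = 0.5) -> bool:
    """Flag accounts whose logins fell to drop_ratio (or less) of the prior period."""
    if usage.logins_prev_30d == 0:
        return False  # no baseline to compare against
    return usage.logins_last_30d <= usage.logins_prev_30d * drop_ratio


def retention_workflow(usage: AccountUsage) -> list:
    """Return the retention steps to trigger, or an empty list if the account looks healthy."""
    if not is_at_risk(usage):
        return []
    return ["personalized_outreach", "discount_offer", "check_in_call"]


print(retention_workflow(AccountUsage("acme", logins_last_30d=4, logins_prev_30d=10)))
```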

As Rachel Sinclair, Acquisitions Director at US Gold and Coin, explains, “AI should not replace human conversations in a high-trust industry like precious metals. It should handle routine inquiries so our team can focus on the thoughtful, high-value discussions that build long-term investor confidence.”

Sales and revenue operations: autonomous prospecting at scale

Outbound sales has traditionally required teams of sales development representatives to research prospects, craft personalized outreach, manage follow-up cadences, and qualify leads before passing them to account executives. Each SDR handles 50 to 80 accounts. Scaling the pipeline meant scaling headcount.

AI agents compress that entire process. A sales agent scrapes LinkedIn profiles, visits prospect websites, cross-references data against an Ideal Customer Profile, scores intent signals, and generates personalized outreach sequences, all without a human writing a single email. If a prospect opens a message but does not reply, the agent adjusts the follow-up. If engagement spikes, the agent escalates to a human closer with a full context brief.
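The follow-up behavior described here amounts to a small decision rule. The sketch below is one hypothetical way to express it; the `ProspectActivity` fields, the engagement score, and the 0.7 spike threshold are illustrative assumptions, not part of any particular sales platform.

```python
from dataclasses import dataclass


@dataclass
class ProspectActivity:
    opened: bool
    replied: bool
    engagement_score: float  # hypothetical 0.0 to 1.0 score from intent signals


def next_step(activity: ProspectActivity, spike_threshold: float = 0.7) -> str:
    """Pick the agent's next move for a prospect."""
    if activity.engagement_score >= spike_threshold:
        # Engagement spike: hand off to a human closer with context.
        return "escalate_to_human"
    if activity.opened and not activity.replied:
        # Opened but silent: change the follow-up rather than repeat it.
        return "adjust_follow_up"
    return "continue_sequence"


print(next_step(ProspectActivity(opened=True, replied=False, engagement_score=0.3)))
```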

From lead research to CRM hygiene

CRM hygiene, one of the most time-consuming and neglected tasks in sales operations, also shifts to agents. They update records after calls, log meeting notes, tag deal stages, and flag stalled opportunities. Sales teams spend less time on data entry and more time in conversations that close revenue.

Restructuring around human-agent units

Companies with 200-person SDR teams are already testing agent-led outbound programs that match the same pipeline coverage with 50 humans and 30 agents. The question is not whether the model works. The question is how quickly sales leaders will restructure around it.

“Productivity is no longer measured by output per person, but by how effectively teams leverage AI agents. The leaders moving fastest are not adding headcount. They are designing systems where each specialist can deliver more with intelligent tools,” said Kos Chekanov, CEO of Artkai.

Finance and accounting: from invoices to anomaly detection

Back-office finance teams process invoices, reconcile payments, generate reports, chase overdue accounts, and flag expense anomalies. These are high-volume, rule-adjacent tasks with clear inputs and outputs, the exact profile where AI agents replace headcount growth most effectively.

Automating the procure-to-pay cycle

Autonomous invoicing agents match payments against purchase orders, follow up on overdue accounts through templated but context-aware communications, and flag discrepancies that exceed defined tolerance thresholds. Expense report agents scan submissions for policy violations, duplicate entries, and out-of-range amounts before routing to approvers.
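A tolerance check of this kind is simple to express. The sketch below assumes a relative tolerance of 2% and a function name, `match_invoice`, invented for illustration; actual thresholds are a finance policy decision.

```python
from decimal import Decimal


def match_invoice(invoice_amount: Decimal, po_amount: Decimal,
                  tolerance: Decimal = Decimal("0.02")) -> str:
    """Compare an invoice to its purchase order.

    Returns 'match' when the relative deviation is within tolerance,
    otherwise 'flag' so a human can review the discrepancy.
    """
    if po_amount == 0:
        return "flag"  # nothing sensible to compare against
    deviation = abs(invoice_amount - po_amount) / po_amount
    return "match" if deviation <= tolerance else "flag"


print(match_invoice(Decimal("1010.00"), Decimal("1000.00")))  # match
print(match_invoice(Decimal("1050.00"), Decimal("1000.00")))  # flag
```

`Decimal` is used instead of floats so that currency comparisons are not affected by binary rounding.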

Financial reporting agents pull data from ERP systems, consolidate across business units, and generate formatted reports on schedule, tasks that previously required dedicated analysts and significant hours during close periods.

Shifting from reconciliation to strategy

OpenAI’s CFO revealed that leading enterprises now staff back-office operations at a ratio of one human to five AI agents. The ratio signals where finance teams are heading: smaller core teams managing larger agent workforces that handle volume, while humans focus on judgment, strategy, and exception handling.

Finance teams have spent decades trapped in reconciliation cycles that consume a significant portion of their time. AI agents are beginning to break that loop by automating repetitive financial workflows. The leaders moving fastest are not automating one process at a time. They are deploying agents across the entire procure-to-pay chain and reallocating their teams toward analysis, forecasting, and decision-making that drives real business impact.

HR and onboarding: the digital employee manager

Employee onboarding involves document collection, system access provisioning, compliance training scheduling, benefits enrollment, and dozens of coordination tasks across IT, legal, and department leads. The process requires weeks of back-and-forth and multiple handoffs, each one a potential delay.

End-to-end onboarding automation

An onboarding agent manages the entire sequence. It sends document requests, tracks completion, provisions software accounts, schedules orientation sessions, assigns training modules, and follows up on outstanding items without a human coordinator managing the workflow manually.

Microsoft’s Ignite 2025 conference showcased HR agents that onboard new employees end-to-end: generating accounts, sending welcome information, and scheduling training by piecing together steps from enterprise systems. The company introduced infrastructure layers, Work IQ, Fabric IQ, and Foundry IQ, to give agents memory, real-time business data, and reliable knowledge bases for operating in enterprise environments.

Beyond onboarding: benefits and policy queries

Beyond onboarding, HR agents handle employee queries on benefits, PTO balances, policy clarifications, and payroll questions. They reduce the load on HR business partners, who can then focus on retention strategy, culture initiatives, and workforce planning rather than answering the same 50 questions every week.

As Logan Peranavan, CEO of TapestoDigital UK, explains, “Onboarding and HR admin break down at handoffs. AI agents bring consistency. They ensure nothing slips through, so teams can focus on people and performance instead of chasing paperwork.”

Marketing and content operations: the always-on campaign engine

Marketing teams face a content production bottleneck that compounds with every new channel, audience segment, and geographic market. Scaling content output has historically meant hiring more writers, designers, and campaign managers.

Restructuring the content supply chain

AI agents restructure the equation. Content agents draft, format, and schedule posts based on real-time trending data and performance analytics. Campaign agents monitor ad performance, reallocate budgets across channels based on conversion data, and generate A/B test variations. Analytics agents compile cross-platform performance reports and surface insights that would take a human analyst hours to assemble.

The content supply chain, from ideation to distribution to performance measurement, can now operate with a smaller core team directing a fleet of specialized agents, each handling one function well rather than one generalist handling everything inadequately.

Personalization at scale becomes feasible

Personalization at scale, long a marketing aspiration, becomes operationally feasible when agents manage the segmentation, content variation, delivery timing, and response tracking that make it work. A team of three marketers directing 10 specialized agents can execute campaigns that previously required a team of 15.

“Marketing teams have always been expected to deliver more without bigger budgets. AI agents make that sustainable. The real advantage is not just producing more content, but personalizing campaigns at a scale that would be impossible if every variation required manual effort,” said Brandy Hastings, SEO Strategist at SmartSites.

The governance question: who owns the agent?

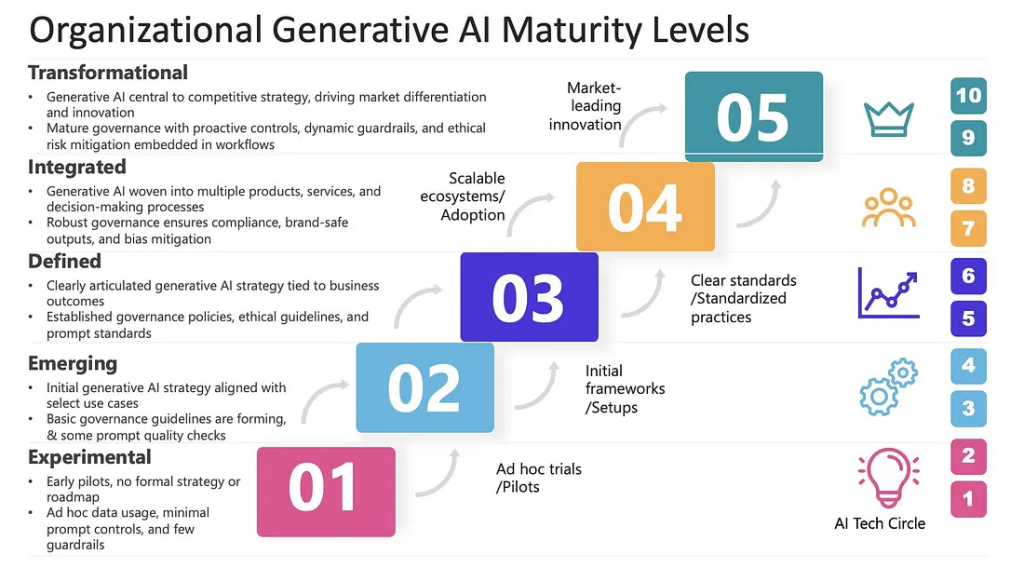

Scaling AI agents without governance frameworks creates a risk that can outpace the productivity gains.

The KPMG Q4 AI Pulse Survey found that 65% of business leaders cite agentic system complexity as the top barrier to scaling, a figure that held steady for two consecutive quarters. The challenge is not deploying a single agent. It is orchestrating dozens or hundreds of agents across departments, each with different permissions, data access levels, and decision-making authority.

“Value doesn’t come from isolated AI tools,” said Adrian Iorga, Founder of Stairhopper Movers. “The real impact happens when systems work together across scheduling, dispatch, and customer communication. That coordination is what drives measurable efficiency and consistent service quality.”

The three questions every board must answer

IBM frames agent identity as a board-level concern. As autonomous agents operate across enterprise systems, organizations must answer three questions: Do we know every AI agent that exists? Do we understand what it accesses? Are we confident in what it does when it reaches a system?

Leading teams embed privacy by design, segment sensitive data, ensure auditability across agent actions and tool calls, and establish continuity of user context across systems. These safeguards are becoming foundational infrastructure, not optional additions.

Defining ownership and accountability

Governance also means defining ownership. When a finance agent processes an incorrect refund or a sales agent sends an unauthorized discount to a prospect, accountability lands on a person, not a system. Organizations scaling agents successfully assign clear human owners for each agent workflow, with defined escalation paths and audit trails that make every agent action traceable to a decision, a permission, and an owner.
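One way to picture that traceability is a registry entry per agent plus an append-only audit trail. The sketch below is a simplified assumption of what such a structure could look like; `AgentRecord`, `AuditTrail`, `attempt`, and the example owner address are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AgentRecord:
    agent_id: str
    owner: str              # the accountable human
    permissions: frozenset  # actions this agent may take


@dataclass
class AuditTrail:
    entries: list = field(default_factory=list)

    def record(self, agent: AgentRecord, action: str, allowed: bool) -> None:
        # Every attempt is logged, whether or not it was permitted.
        self.entries.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent_id": agent.agent_id,
            "owner": agent.owner,
            "action": action,
            "allowed": allowed,
        })


def attempt(agent: AgentRecord, action: str, trail: AuditTrail) -> bool:
    """Check the action against the agent's permissions and log the decision."""
    allowed = action in agent.permissions
    trail.record(agent, action, allowed)
    return allowed


finance_agent = AgentRecord("finance-refunds", "jane.doe@example.com",
                            frozenset({"issue_refund"}))
trail = AuditTrail()
attempt(finance_agent, "issue_refund", trail)   # permitted
attempt(finance_agent, "send_discount", trail)  # denied, but still logged
```

Each log entry ties an action to a permission decision and a named owner, which is the property the paragraph above asks for.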

The governance gap is emerging as one of the biggest risks in agentic AI adoption. Many organizations are deploying agents faster than they are building the control systems needed to manage them. Every agent should have clear ownership, every action should be traceable, and governance frameworks must scale alongside deployment rather than being rebuilt after failures occur.

What goes wrong when agents scale too fast

The risks of agentic AI are proportional to the autonomy granted. More capable agents with broader system access create larger blast radii when they fail.

Hallucinations and trust erosion

Hallucinations remain the most visible failure mode. An agent that fabricates a customer record, generates an inaccurate financial report, or sends a misleading communication damages trust in ways that are difficult to reverse. CIO Magazine’s analysis of agentic deployments argues for “shattering monolithic agents into micro-specialists” (one agent, one task), because specialized agents produce fewer catastrophic failures than generalist agents trying to do everything.

Data security and permission models

Data security is the second major risk vector. Agents that access CRM data, financial records, employee information, and client communications must operate under strict permission models. Retrieval-Augmented Generation (RAG) architectures can support this: when retrieval is filtered by the agent’s permissions, the agent sees only the data relevant to its current task, rather than indexing or training on company-wide information.
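To make the permission point concrete, here is a toy retrieval function that filters by scope before it ranks anything. It is a sketch under stated assumptions: keyword overlap stands in for the vector similarity a real RAG system would use, and the `scope` field and `retrieve` function are invented for illustration.

```python
def retrieve(query: str, documents: list[dict], agent_scopes: set[str],
             top_k: int = 3) -> list[dict]:
    """Return up to top_k in-scope documents that share words with the query."""
    terms = set(query.lower().split())
    # Permission filter first: out-of-scope documents are never scored.
    visible = [d for d in documents if d["scope"] in agent_scopes]
    # Keyword overlap is a stand-in for embedding similarity.
    scored = [(len(terms & set(d["text"].lower().split())), d) for d in visible]
    relevant = [(score, d) for score, d in scored if score > 0]
    relevant.sort(key=lambda pair: pair[0], reverse=True)
    return [d for _, d in relevant[:top_k]]


docs = [
    {"scope": "support", "text": "Refund policy for annual plans"},
    {"scope": "finance", "text": "Refund ledger and payroll export"},
    {"scope": "support", "text": "Password reset steps"},
]
hits = retrieve("refund policy", docs, agent_scopes={"support"})
print([d["text"] for d in hits])  # ['Refund policy for annual plans']
```

Filtering before scoring matters: a support agent never sees the finance document, even though it also mentions refunds.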

Cost blindness and ROI concerns

Cost monitoring is a risk that catches organizations off guard. An inefficient agent consuming excessive API tokens can become more expensive than the human it replaced. Without tracking output per unit of compute, the emerging metric for agentic efficiency, companies lose visibility into whether their agents deliver value or burn budget. Forrester projects that 25% of planned AI spend will be deferred by 2027 due to ROI concerns. The firms that avoid that outcome are the ones measuring agent performance from day one.
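The arithmetic behind that metric is straightforward. The sketch below uses made-up numbers throughout: the token price, the volumes, and the human cost per ticket are placeholders, not benchmarks.

```python
def cost_per_transaction(tokens_used: int, price_per_1k_tokens: float,
                         transactions: int) -> float:
    """Model spend divided by completed transactions."""
    if transactions <= 0:
        raise ValueError("transactions must be positive")
    return (tokens_used / 1000) * price_per_1k_tokens / transactions


def agent_is_cheaper(agent_cost: float, human_cost: float) -> bool:
    """True when the agent's cost per transaction undercuts the human baseline."""
    return agent_cost < human_cost


# Hypothetical month: 2M tokens at $0.01 per 1K tokens, 400 tickets resolved.
efficient = cost_per_transaction(2_000_000, 0.01, 400)
# Same spend, but the agent only resolved 4 tickets.
wasteful = cost_per_transaction(2_000_000, 0.01, 4)

HUMAN_COST_PER_TICKET = 3.00  # placeholder baseline
print(agent_is_cheaper(efficient, HUMAN_COST_PER_TICKET))  # True
print(agent_is_cheaper(wasteful, HUMAN_COST_PER_TICKET))   # False
```

The same token bill produces opposite conclusions depending on how much work actually completed, which is why spend alone is a poor signal.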

The infrastructure gap

The tooling gap compounds these risks. SiliconANGLE research from January 2026 found that only 17% of AI platforms scored A- or better in agent design tools, and just 11% met that threshold for evaluation tools. The infrastructure to build, test, and monitor agents at scale has not caught up with the ambition to deploy them.

“When AI agents are connected to core systems like CRM, finance, or employee records, governance becomes critical,” said David Lee, Managing Director at Functional Skills. “Organizations must treat agent access with the same rigor as human access. In many cases, policies and training have not yet caught up with the technology.”

How to start: from pilot to production

McKinsey’s own trajectory provides a reference point. The firm started with 3,000 agents, reached 25,000 in under 18 months, and targets 40,000 by year-end 2026, with each employee paired with at least one agent. The path was not a single deployment event. It was a deliberate, phased expansion.

The phased approach appears consistently across every enterprise that has scaled agents successfully.

Phase 1: Identify the bottleneck

Find one high-volume, low-risk workflow where task completion correlates directly with headcount. FAQ responses, invoice processing, lead qualification, and employee onboarding are common starting points. Define success metrics before deployment: resolution rate, processing time, accuracy, and cost per transaction.

Phase 2: Deploy with a human in the loop

Start the agent on a subset of data. Connect it to one system, not all systems. Implement a review layer where the agent drafts actions and a human approves them. Track accuracy, edge cases, and failure modes. This phase builds institutional confidence and exposes integration gaps before they become production incidents.
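The review layer can be pictured as a loop where nothing executes without approval. In this sketch, `DraftAction`, `run_with_review`, and the approval rule are hypothetical; in practice the `approve` callback would be a human decision in a review queue, not a lambda.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class DraftAction:
    description: str
    payload: dict


def run_with_review(drafts: list,
                    approve: Callable[[DraftAction], bool],
                    execute: Callable[[DraftAction], None]) -> dict:
    """Execute only approved drafts and keep counts for accuracy tracking."""
    stats = {"approved": 0, "rejected": 0}
    for draft in drafts:
        if approve(draft):
            execute(draft)
            stats["approved"] += 1
        else:
            stats["rejected"] += 1
    return stats


executed = []
drafts = [
    DraftAction("Refund order 1001", {"amount": 20}),
    DraftAction("Refund order 1002", {"amount": 900}),
]
# Stand-in for a human reviewer: reject anything over 100.
stats = run_with_review(drafts,
                        approve=lambda d: d.payload["amount"] <= 100,
                        execute=lambda d: executed.append(d.description))
print(stats)  # {'approved': 1, 'rejected': 1}
```

The rejection count doubles as a measurement: a falling rejection rate is one signal that the agent is ready for the next phase.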

Phase 3: Expand tool access and autonomy

As accuracy stabilizes, give the agent direct access to additional systems: Slack, Salesforce, databases, and scheduling tools. Reduce the human approval layer from every action to exception-only review. Monitor cost per transaction and output quality continuously.

Phase 4: Build the multi-agent ecosystem

Deploy specialized agents for different tasks within the same workflow. One agent identifies problems. A second resolves them. A third audits the resolution. Gartner forecasts that by 2027, 70% of multi-agent systems will contain agents with narrow, focused roles, not generalists. Humans shift from doing the work to orchestrating the agents that do the work.
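The identify, resolve, audit pattern can be sketched with three small functions standing in for three agents. Everything here is illustrative: the missing-address scenario, the function names, and the `address_book` lookup are assumptions chosen to keep the example short.

```python
from typing import Optional


def identify(order: dict) -> Optional[str]:
    """Agent 1: detect a problem. Here, a missing shipping address."""
    if not order.get("address"):
        return "missing_address"
    return None


def resolve(order: dict, issue: str, address_book: dict) -> dict:
    """Agent 2: propose a fixed copy of the order without mutating the original."""
    fixed = dict(order)
    if issue == "missing_address":
        fixed["address"] = address_book.get(order["customer"], "")
    return fixed


def audit(fixed: dict) -> bool:
    """Agent 3: independently verify that the resolution actually worked."""
    return bool(fixed.get("address"))


def pipeline(order: dict, address_book: dict) -> tuple:
    issue = identify(order)
    if issue is None:
        return order, "ok"
    fixed = resolve(order, issue, address_book)
    if audit(fixed):
        return fixed, "resolved"
    return order, "escalate"  # failed audit goes to a human


book = {"ada": "1 Main St"}
print(pipeline({"customer": "ada", "address": ""}, book)[1])  # resolved
print(pipeline({"customer": "bob", "address": ""}, book)[1])  # escalate
```

Keeping the auditor separate from the resolver means a bad fix is caught by a component that did not produce it.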

“The proof-of-concept phase is over,” IBM stated in its 2026 AI goals framework. “The challenge is not whether agentic AI works. The challenge is whether you can deploy it reliably across your organization, at scale.”

Every agentic AI initiative should be tied to clear KPIs and a defensible ROI model before scaling. As Joern Meissner, Founder & Chairman of Manhattan Review, explains, “The real divide is not about access to AI. It is about execution. Some organizations experiment and stall. Others measure rigorously, scale deliberately, and quickly pull ahead.”

A common mistake organizations make is attempting to deploy AI agents across multiple departments at once. The teams seeing real success start with a single workflow, prove measurable value within a defined timeframe, and then expand gradually. Clear scoping and disciplined execution often determine whether an initiative remains a short-term pilot or evolves into a permanent operating model.

Image source: Medium

The workforce equation has changed

The narrative of replacement misses the structural shift. McKinsey grew client-facing roles by 25% while cutting back-office positions by 25%. The workforce is not shrinking uniformly. It is restructuring around a new division of labor: agents handle volume, humans handle judgment.

Restructuring, not replacement

Only 32% of AI use cases sit in production today, up from 15% a year ago. The gap between deployment ambition and operational maturity is still wide. But the direction of travel leaves little room for ambiguity. Enterprises that build the infrastructure to scale agents (governance layers, monitoring systems, identity management, and cost tracking) will compound their advantage every quarter. Those that delay will find the gap increasingly difficult to close.

The competitive divide widens

The organizations scaling fastest in 2026 are not the ones deploying the most agents. They are the ones deploying agents with the clearest governance, the most well-defined metrics, and the strongest alignment between agent capability and business outcome.

For business leaders, the question is no longer whether AI agents can replace headcount. The question is which workflows agents should own, which decisions still require human judgment, and how quickly the organization can build the governance infrastructure to scale both.

The firms that answer those questions first will define the economics of the next decade. The rest will be hiring to keep up.