In July 2023, I decided to get serious about skincare. Like many people starting their skincare journey, I copied what I saw online and bought everything the Internet said I needed: a face wash, toner, vitamin C serum, moisturiser, and sunscreen, all without a skin analysis or consultation with a dermatologist.

A week later, my face broke out, and my “Internet dermatologists” said my skin was just adjusting to the routine. Two weeks and larger breakouts later, I gave up on the routine entirely. Looking back, the entire process was purely guesswork.

Oyster, a Nigerian startup building an AI-powered skincare platform, wants to stop guesswork, influencer recommendations, and trial and error from driving skincare decisions. The app uses a smartphone camera to analyse a user’s skin and recommend products based on that assessment.

Oyster was founded in August 2025 by Jude Chikezie, a former commercial director at Curacel AI, a YC-backed insurtech company. Chikezie also previously owned a beauty consulting firm that worked with skincare brands such as Nivea and Eucerin across Africa, and Oyster emerged from a pattern he noticed while managing customer support operations for beauty retailers.

Oyster allows users to log their skincare routines and purchase recommended products directly through the platform, which connects to partner retailers.

To see how well that promise holds up, I downloaded the app on Friday, the 13th of March and scanned my face.

Scanning my face

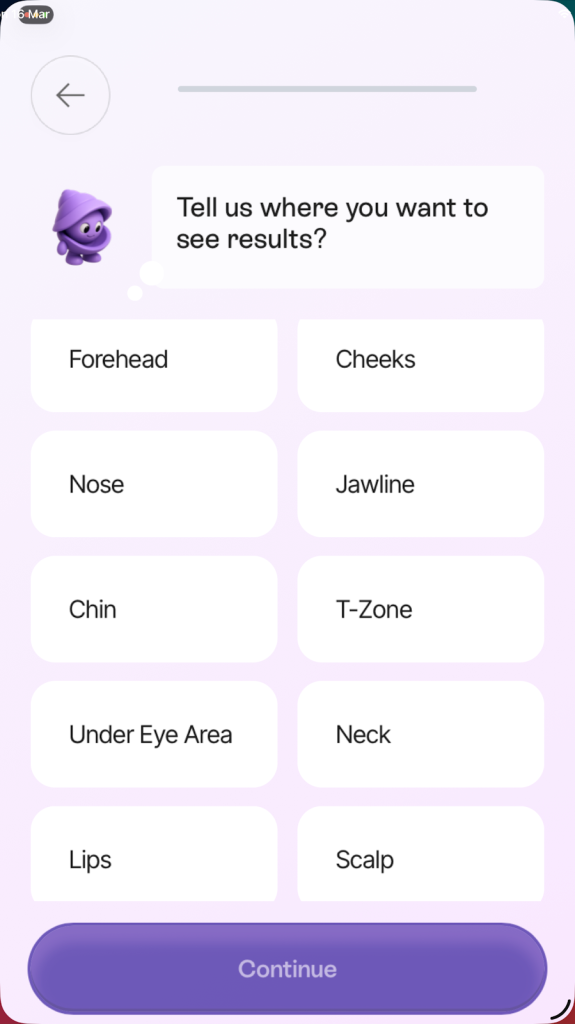

After I downloaded Oyster, the app began with a short onboarding process. It asked questions about my allergies, skincare experience level, budget, and the skin concerns I wanted to address. The goal was to give the system some baseline information before the analysis began. Once I completed the onboarding process, the app prompted me to scan my face.

Oyster asked me to take three photos with my camera: one facing forward, one with my head slightly turned to the right, and another with my head slightly turned to the left. After capturing the images, the app began analysing the scan.

According to Chikezie, behind the interface, Oyster’s technology processes the images by dividing each one into four segments so that different parts of the face, including the forehead, cheeks, nose, jawline, chin, and the T-zone, can be examined simultaneously. Because the scan captures three photos, the system ends up analysing twelve image segments at once.
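As a rough illustration of that arithmetic, and not a description of Oyster’s actual pipeline, the sketch below splits each of three photos into four segments and counts the result. The 2x2 grid, the function names, and the blank placeholder images are all assumptions made for the example; Chikezie did not say how the segments are drawn.

```python
# Hypothetical sketch: the article only says each photo is divided into four
# segments. The 2x2 grid used here is an assumption, not Oyster's method.

def split_into_quadrants(image):
    """Split a 2D grid of pixel values into four quadrants."""
    height = len(image)
    width = len(image[0])
    mid_row, mid_col = height // 2, width // 2
    top, bottom = image[:mid_row], image[mid_row:]
    return [
        [row[:mid_col] for row in top],     # top-left
        [row[mid_col:] for row in top],     # top-right
        [row[:mid_col] for row in bottom],  # bottom-left
        [row[mid_col:] for row in bottom],  # bottom-right
    ]

def segment_scan(photos):
    """Return (view, segment_index, segment) for every segment of every photo."""
    segments = []
    for view, image in photos.items():
        for index, segment in enumerate(split_into_quadrants(image)):
            segments.append((view, index, segment))
    return segments

# Placeholder 4x4 "images" standing in for the three captured photos.
blank = [[0] * 4 for _ in range(4)]
photos = {"front": blank, "right": blank, "left": blank}
segments = segment_scan(photos)
print(len(segments))  # 12
```

Three photos with four segments each give the twelve segments Chikezie described.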

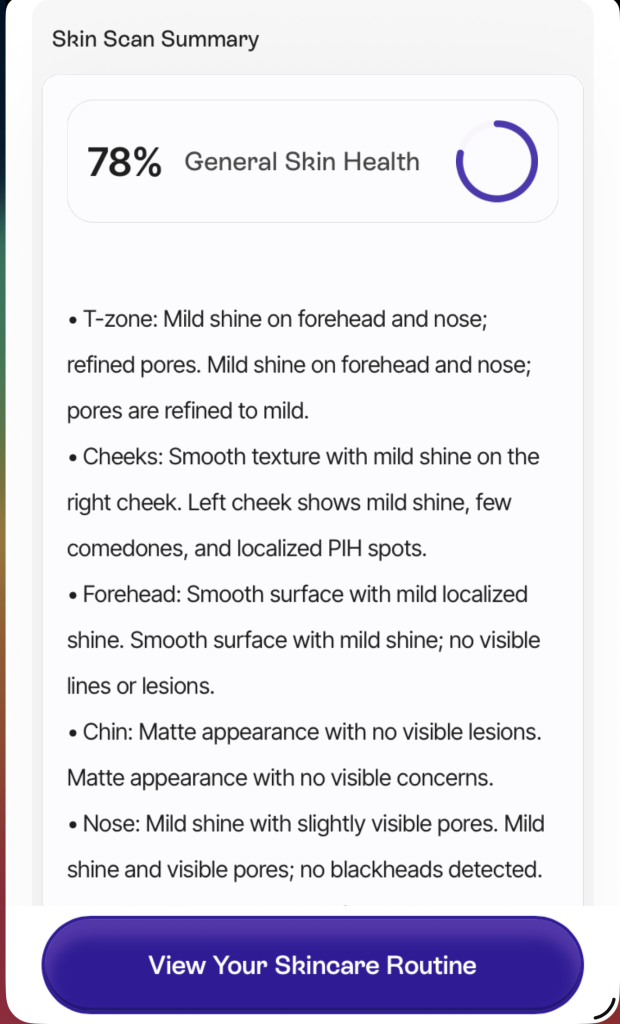

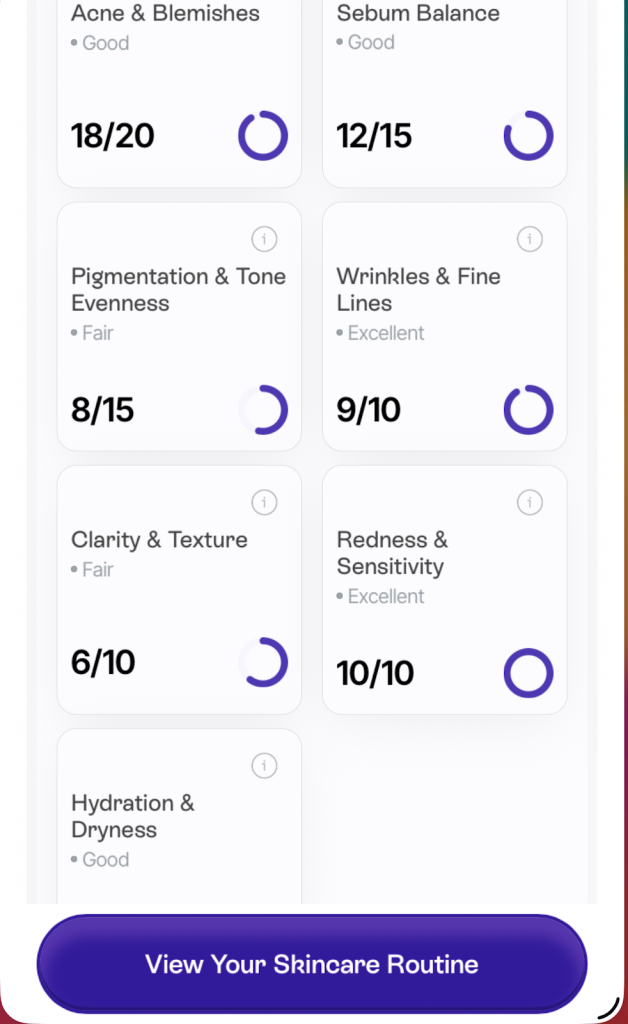

Within seconds of image analysis, the results appear as a skin health percentage score along with a breakdown of different skin metrics, including acne and blemishes, sebum balance, pigmentation and tone evenness, wrinkles and fine lines, skin clarity and texture, redness and sensitivity, and hydration or dryness.

“Previously, we tracked about eleven parameters. Now we track up to thirty-three different parameters across the skin,” Chikezie said when explaining the evolution of the system’s analysis capabilities.

He added that Oyster’s scoring system was derived from dermatological grading frameworks used in clinical practice, including the Global Acne Grading System (GAGS), a method dermatologists use to evaluate acne severity across different parts of the face.
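For readers unfamiliar with GAGS, the sketch below shows how the published system is commonly described: each region gets a lesion grade from 0 to 4, the grade is multiplied by a fixed regional factor, and the sum is mapped to a severity band. This illustrates the clinical framework only. How Oyster adapts it into a percentage score is not something Chikezie detailed, and the function names are my own.

```python
# Regional factors and severity bands as commonly cited for the published
# Global Acne Grading System. This is NOT Oyster's scoring code.
GAGS_FACTORS = {
    "forehead": 2,
    "right_cheek": 2,
    "left_cheek": 2,
    "nose": 1,
    "chin": 1,
    "chest_upper_back": 3,
}

def gags_score(grades):
    """Sum factor x grade per region; grade runs from 0 (none) to 4 (nodules)."""
    total = 0
    for region, factor in GAGS_FACTORS.items():
        grade = grades.get(region, 0)  # regions not assessed count as 0
        if not 0 <= grade <= 4:
            raise ValueError(f"grade for {region} must be between 0 and 4")
        total += factor * grade
    return total

def gags_severity(total):
    """Map a global GAGS score to its severity band."""
    if total == 0:
        return "none"
    if total <= 18:
        return "mild"
    if total <= 30:
        return "moderate"
    if total <= 38:
        return "severe"
    return "very severe"

example = {"forehead": 2, "right_cheek": 1, "left_cheek": 1, "nose": 1}
total = gags_score(example)
print(total, gags_severity(total))  # 9 mild
```

Note that GAGS includes the chest and upper back, which a face-only scan cannot see, so any app-based adaptation would have to leave that region out or handle it differently.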

Training the system to recognise those skin conditions required building datasets that represent a wide range of skin tones, according to Chikezie. The models, built through integration with Google’s Gemini AI model, were trained on the Fitzpatrick skin scale, a dermatological classification used to categorise skin tones from lighter to darker.

To assemble those datasets, Oyster said it worked with aestheticians, dermatologists, and beauty retailers who conduct consultations with customers to generate information about skin concerns and treatment recommendations. Chikezie noted that the company collected additional training signals from early product use, during which the tool recorded roughly 48,000 skin scans.

Those scans, he said, helped refine the model’s ability to identify skin conditions on darker skin tones.

In my case, the scan flagged several concerns, including hyperpigmentation, enlarged pores, fine lines, and some others I will not be revealing. The analysis also highlighted areas where my skin performed well (which I will be fully revealing), including even tone, smooth texture, good radiance, even jawline texture, good plumpness, and no redness.

Once the analysis was completed, the app generated a personalised daily skincare routine based on the concerns detected during the scan and the preferences provided during onboarding. The app allows users to log their routines and track changes over time, which suggests Oyster is designed for repeated use rather than a one-off scan.

The app connects this routine to a marketplace where I could purchase recommended products from its partner retailers. It also includes an AI assistant that responds to skincare questions using information from my scan and profile.

How accurate is Oyster?

One thing I noticed while testing Oyster was that running the scan multiple times produced results that were similar but not identical: some highlighted concerns appeared at slightly different intensities, and the overall skin score shifted between scans.

That inconsistency becomes more significant when the output is tied to action. If the model misreads a condition, users may end up treating the wrong problem. Positioning itself as a triage tool rather than a diagnostic system keeps Oyster out of regulators’ sight, since it is not a medical device. Still, the risk remains that users could rely on its recommendations in cases where professional medical advice is more appropriate.

Chikezie said the system attempts to control for several factors that can affect scan quality. Before analysing an image, Oyster runs what he described as an image quality validation stage, in which the software checks whether the face is properly centred, whether the image is blurred, and whether the lighting conditions are adequate.

He pointed out that differences between natural daylight and indoor lighting can affect how pigmentation and shadows appear in images, so the system attempts to measure luminance levels and shadow distribution to reduce the risk of misreading shadows as skin conditions.
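To make the idea of a validation stage concrete, here is a minimal sketch of the three checks Chikezie listed: centring, blur, and lighting. Everything specific in it is an assumption on my part, including the thresholds, the neighbouring-pixel sharpness proxy, and the idea that a face bounding box is already available. Oyster did not share how its own checks are implemented.

```python
# Hypothetical quality gate. Thresholds and methods are illustrative only.

def mean_luminance(gray):
    """Average brightness of a grayscale image given as rows of 0-255 values."""
    pixels = [p for row in gray for p in row]
    return sum(pixels) / len(pixels)

def sharpness(gray):
    """Crude blur proxy: mean absolute difference between horizontal neighbours."""
    diffs = [abs(row[i + 1] - row[i]) for row in gray for i in range(len(row) - 1)]
    return sum(diffs) / len(diffs)

def is_centred(face_box, width, height, tolerance=0.15):
    """Check that the face box centre lies near the middle of the frame."""
    left, top, right, bottom = face_box
    centre_x = (left + right) / 2 / width
    centre_y = (top + bottom) / 2 / height
    return abs(centre_x - 0.5) <= tolerance and abs(centre_y - 0.5) <= tolerance

def validate_image(gray, face_box, min_lum=60, max_lum=200, min_sharpness=5.0):
    """Return a list of problems; an empty list means the image passes."""
    height, width = len(gray), len(gray[0])
    problems = []
    if not is_centred(face_box, width, height):
        problems.append("face not centred")
    if sharpness(gray) < min_sharpness:
        problems.append("image too blurry")
    if not min_lum <= mean_luminance(gray) <= max_lum:
        problems.append("lighting too dark or too bright")
    return problems

good = [[100, 140, 100, 140] for _ in range(4)]
bad = [[10, 10, 10, 10] for _ in range(4)]
print(validate_image(good, face_box=(1, 1, 3, 3)))
print(validate_image(bad, face_box=(0, 0, 1, 1)))
```

A gate like this rejects a photo before any skin analysis runs, which is one way an app could prompt a rescan instead of scoring a dim or off-centre image.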

Beyond technical constraints, Oyster also sits in a grey area between skincare guidance and medical diagnosis. Chikezie said the company tries to draw that boundary through a scoring threshold.

“If your skin analysis falls below a certain threshold, the system prompts you to speak to a specialist,” he said, explaining that Oyster is meant to function as a triage tool rather than attempting to provide a full diagnosis in those cases.
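In code terms, the rule he described amounts to a simple cut-off. The sketch below assumes a 0 to 100 score and a threshold of 50 purely for illustration; Chikezie did not disclose the actual value or the wording of the prompt.

```python
# Hypothetical threshold; the real value was not disclosed.
REFERRAL_THRESHOLD = 50

def triage(skin_score, threshold=REFERRAL_THRESHOLD):
    """Route low scores to a specialist, everything else to a routine."""
    if not 0 <= skin_score <= 100:
        raise ValueError("skin_score must be between 0 and 100")
    if skin_score < threshold:
        return "refer_to_specialist"
    return "generate_routine"

print(triage(42))  # refer_to_specialist
print(triage(78))  # generate_routine
```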

To evaluate the system’s performance, Oyster compares its predictions with assessments made by aestheticians and dermatologists during consultations. It does so through a publicly visible internal evaluation system that measures how closely the model’s conclusions align with those of human specialists.
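Oyster did not say which metric it uses to measure that alignment. As one plausible illustration, the sketch below computes raw agreement and Cohen’s kappa, a standard statistic that corrects agreement for chance, on a made-up set of labels. The labels and function names are invented for the example.

```python
from collections import Counter

def percent_agreement(model_labels, specialist_labels):
    """Share of cases where the model and the specialist gave the same label."""
    matches = sum(m == s for m, s in zip(model_labels, specialist_labels))
    return matches / len(model_labels)

def cohens_kappa(model_labels, specialist_labels):
    """Agreement corrected for the agreement expected by chance."""
    n = len(model_labels)
    observed = percent_agreement(model_labels, specialist_labels)
    model_counts = Counter(model_labels)
    specialist_counts = Counter(specialist_labels)
    labels = set(model_counts) | set(specialist_counts)
    expected = sum(
        (model_counts[label] / n) * (specialist_counts[label] / n)
        for label in labels
    )
    if expected == 1:  # both raters used a single identical label throughout
        return 1.0
    return (observed - expected) / (1 - expected)

# Made-up labels for four consultations.
model = ["acne", "acne", "clear", "clear"]
specialist = ["acne", "clear", "clear", "clear"]
print(percent_agreement(model, specialist))  # 0.75
print(cohens_kappa(model, specialist))       # 0.5
```

The gap between the two numbers shows why raw agreement alone can flatter a model: some matches would happen even if both sides were guessing.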

Oyster beyond the app

Oyster’s most visible product is its consumer-facing app. However, Chikezie says the company’s ambition is to become an intelligence layer for the beauty industry.

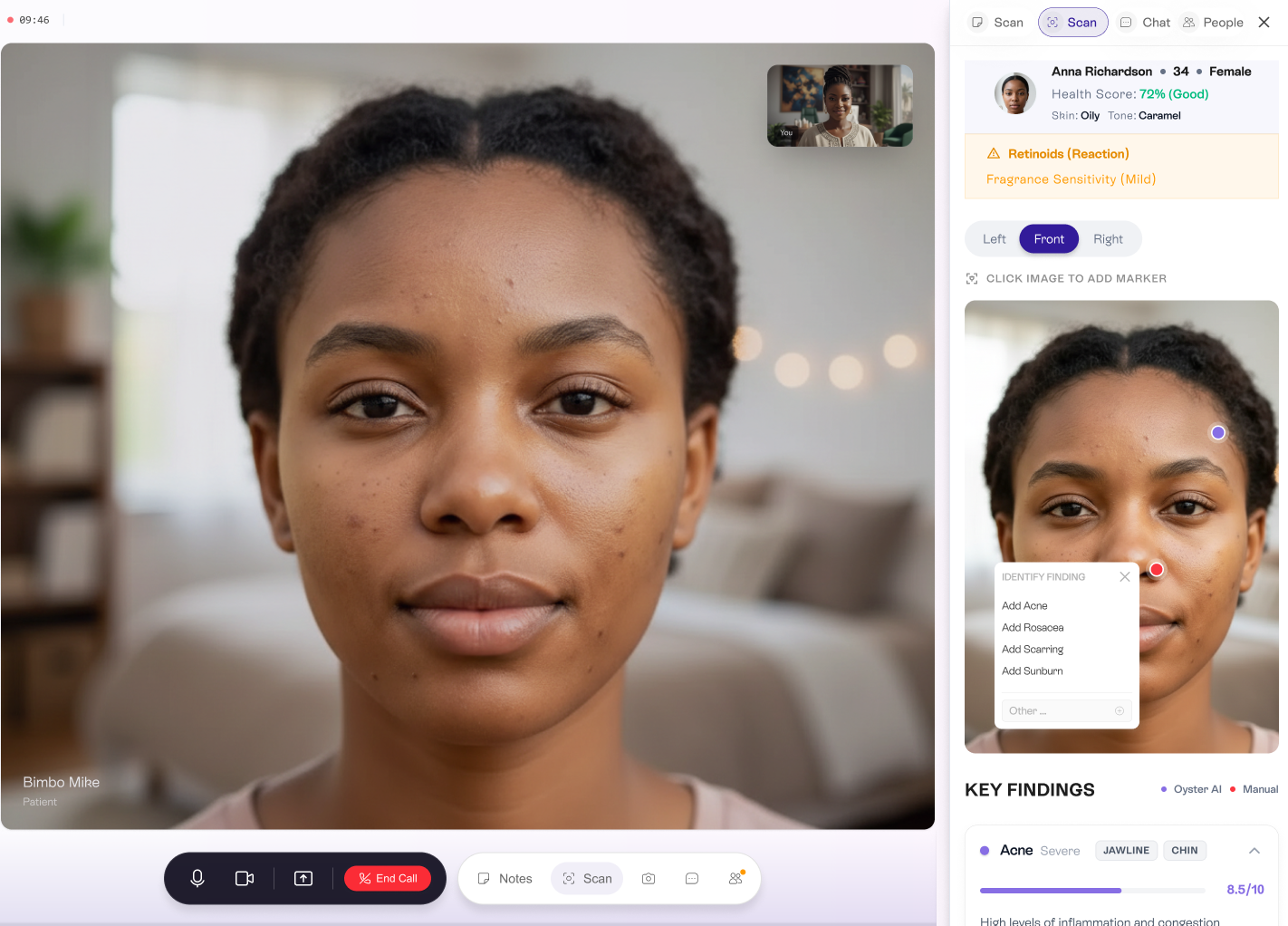

Oyster has developed a consultation system that allows aestheticians and dermatology clinics to run video consultations in which the AI system analyses a patient’s skin in real time, highlights potential concerns, and automatically generates recommended routines after the session.

The company also provides software that retailers can embed on their websites so customers can analyse their skin and receive product recommendations before buying. In physical retail environments, Oyster is experimenting with hardware installations, such as smart mirrors, that allow customers to scan their skin in pharmacies or beauty stores and receive recommendations based on the store’s available products.

The infrastructure layer has become Oyster’s primary revenue engine, according to Chikezie. He said roughly 80% of the company’s revenue now comes from businesses rather than the consumer app.

He also noted that clinics and aestheticians pay to use Oyster’s consultation and analysis tools, with pricing varying by use case and contract. Some are charged per consultation, while others pay based on how many practitioners use the system. Retailers integrating Oyster’s technology into their websites or stores are also charged based on usage.

Oyster also takes a commission on purchases made by users on the platform.

Oyster operates in a market already served by less structured alternatives. Some skincare users already rely on social media creators to interpret skin conditions and make skincare decisions. General-purpose AI tools from larger technology companies have also begun to incorporate image-based analysis features and can respond to prompts such as “what’s wrong with my skin?” or “what products should I use?”, offering similar guidance without being built specifically for skincare.

The difference Oyster is trying to establish is in how it combines analysis with recommendations and product discovery. Whether that integration is enough to distinguish it from existing tools remains to be seen, as AI models from large companies continue to improve their ability to analyse images.

Oyster’s future in Africa’s beauty industry, which is projected to pass $10 billion by 2030, will depend on the adoption of its infrastructure across markets. If that works, Oyster could become an integral part of how skincare decisions are made across the industry. If it doesn’t, its features could become just another source of skincare advice, alongside social media influencers and general-purpose AI tools.